Currently I can render 6 cube face images and composite them into a cube map or equirectangular panorama, but maybe Vuo could add built-in support (which could maybe also do it more efficiently)?

We also need an easy way to apply effects to the lat-long image without distorting the effect, and hopefully without having to adjust the effect function. This article discusses the issues in processing lat-long images as if they were normal images. Blur is given as a specific example: standard blurring will cause the blur to be greater at the poles and less at the equator.
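To make the latitude dependence concrete, here is a rough Python sketch (not Vuo code). It assumes row 0 is the top of the image and that a pixel's east-west footprint on the sphere shrinks with the cosine of its latitude. The function names and the `max_scale` clamp are my own, purely for illustration.

```python
import math

def row_latitude(y, height):
    """Latitude (radians) at the centre of pixel row y; row 0 is the top (+pi/2 side)."""
    return (0.5 - (y + 0.5) / height) * math.pi

def horizontal_radius_px(base_radius_px, y, height, max_scale=64.0):
    """Horizontal kernel radius in pixels that spans the same arc on the
    sphere as base_radius_px does at the equator.

    A pixel at latitude phi covers an east-west arc proportional to cos(phi),
    so the radius grows by 1/cos(phi). max_scale is an assumed clamp that
    stops the kernel from exploding in the rows nearest the poles.
    """
    cos_lat = math.cos(row_latitude(y, height))
    scale = min(1.0 / max(cos_lat, 1e-9), max_scale)
    return base_radius_px * scale
```

An effect that wanted to look uniform on the sphere would have to vary its kernel per row like this, which is exactly the kind of adjustment to the effect function that it would be nice to avoid.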

Article: http://www.cgchannel.com/2015/04/the-foundry-reveals-the-five-key-problems-of-vr-work/

One easy solution is to render the effects with the cube map images (6) then map that into a lat-long.
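For anyone wondering what that final mapping step involves, here is a minimal Python sketch of the lookup for one lat-long pixel: turn the pixel into a direction, pick the cube face by the largest axis component, then project onto that face. The axis layout (longitude 0 looking down +z, y up) and the per-face u/v orientation are assumptions chosen for illustration. They are not taken from Vuo or from the attached node.

```python
import math

def equirect_pixel_to_direction(px, py, width, height):
    """Unit direction for the centre of lat-long pixel (px, py).
    Assumed convention: longitude 0 looks down +z, y is up, row 0 is the top."""
    lon = ((px + 0.5) / width - 0.5) * 2.0 * math.pi
    lat = (0.5 - (py + 0.5) / height) * math.pi
    return (math.cos(lat) * math.sin(lon),
            math.sin(lat),
            math.cos(lat) * math.cos(lon))

def direction_to_cube_face(x, y, z):
    """Return (face, u, v) with u and v in [0, 1].
    The face is the axis with the largest absolute component; the u/v
    orientation per face is an arbitrary, illustrative choice."""
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        face, major, sc, tc = ("+x", ax, -z, y) if x > 0 else ("-x", ax, z, y)
    elif ay >= az:
        face, major, sc, tc = ("+y", ay, x, -z) if y > 0 else ("-y", ay, x, z)
    else:
        face, major, sc, tc = ("+z", az, x, y) if z > 0 else ("-z", az, -x, y)
    # Dividing by the major axis projects the direction onto the face plane.
    return face, 0.5 * (sc / major + 1.0), 0.5 * (tc / major + 1.0)
```

Looping this over every output pixel and sampling the chosen face at (u, v) gives the lat-long image. A real node would do the same thing per fragment in a shader.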

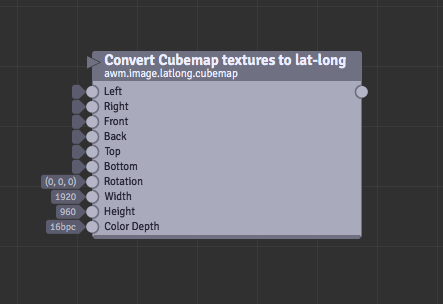

Hey, I have been working on this node for converting 6 cube-map images to lat-long. I have been able to iron out the issues I found.

This currently fills a need for working with Cube-Map images, which isn’t outlined above. I envision 3 image nodes (in addition to the nodes outlined above by @smokris):

- image.latlong.cubemap: converts 6 cubemap images → latlong*
- image.latlong.rotate: allows rotating pre-rendered latlong
- image.latlong.fisheye: converts fisheye (or arbitrary FOV) → latlong
- image.latlong.2d: converts 2d images to latlong with ability to orientate and zoom

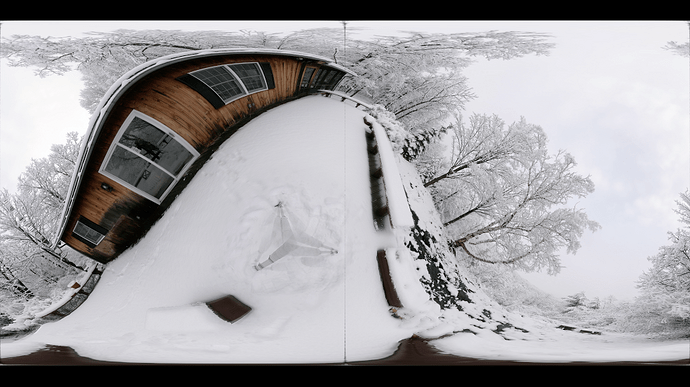

I have attached the node (source and example composition). The cave is a cave I photographed. I would like to hear feedback from other Vuo users, as this area is really exciting and special to me.

I haven’t named it vuo.image.latlong.cubemap as I didn’t want to confuse anyone. If Team Vuo wants to use it, I give them complete license to do so (MIT style). If any name changes need to occur, please make them. I notice that we are using Equirectangular, Cube-Map & Sphere interchangeably for the same thing.

Just a reply to @smokris from above:

- The six cube face images are then composited into a single 2:1 (equirectangular panorama) image.

- Render Scene to Image uses its width; height is ignored (it’s always width/2)

- Render Scene to Window scales the 2:1 image to fit (or fill?) the window

I thought that too; however, YouTube uses a 16:9 ratio for distribution: 3840x2160. This is why I have provided separate width & height inputs. It just makes it easier to output to whatever a user needs. If they want 2:1, they can either use a maths node or enter the resolution directly. Facebook currently uses 2:1, I think, but they are in the process of redesigning their 360 VR service.

It’s also extremely important to get this part of the output correct, as incorrect (non-matching) resolutions may cause issues down the track with missing pixels.

*For dealing with other cube map layouts, such as Cross, where all faces are within one image, cropping within Vuo could easily solve that situation. However, if built-in support is something that people want, please comment here.

NOTE: At this point the node does not perform any form of antialiasing. I am in the process of testing whether antialiasing is needed within the node, or whether external antialiasing is enough (such as rendering out larger and placing an antialiasing filter in front).

cubemap2latlong.zip (3.98 MB)

@alexmitchellmus, thanks for the Convert Cubemap Textures to Lat-Long code! We’ll work on integrating it into Vuo.

NOTE2: I/We really need to finish off the supersampling of this plugin and provide a unified menu to allow it to be switched on / off. This needs to be done in Spherical Co-ordinate space, and not as a post process, (but a post process AA filter for spherical would also be useful).
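To illustrate what supersampling in spherical space (rather than as a post process) could look like, here is a rough Python sketch. Each output pixel is split into an n×n grid of sub-pixel positions, each position is converted to its own direction, and the lookups are averaged. The `sample` callback is a hypothetical stand-in for the cube-map lookup, and the axis convention (longitude 0 down +z, y up) is assumed. This is not the plugin's actual code.

```python
import math

def subpixel_directions(px, py, width, height, n=2):
    """Unit directions for an n x n grid of sub-pixel centres inside
    lat-long pixel (px, py). Assumed convention: longitude 0 looks down +z, y is up."""
    dirs = []
    for j in range(n):
        for i in range(n):
            sx = px + (i + 0.5) / n          # sub-pixel x, in pixel units
            sy = py + (j + 0.5) / n          # sub-pixel y, in pixel units
            lon = (sx / width - 0.5) * 2.0 * math.pi
            lat = (0.5 - sy / height) * math.pi
            dirs.append((math.cos(lat) * math.sin(lon),
                         math.sin(lat),
                         math.cos(lat) * math.cos(lon)))
    return dirs

def supersample(px, py, width, height, sample, n=2):
    """Average sample(direction) over the sub-pixel directions.
    `sample` is a hypothetical callback standing in for the cube-map lookup."""
    dirs = subpixel_directions(px, py, width, height, n)
    return sum(sample(d) for d in dirs) / len(dirs)
```

A plain average like this ignores the small difference in solid angle between sub-samples within one pixel. Whether that matters in practice would need testing.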

So, if one was going to use this with Vuo scene geometry, they would set up 6 different cameras and output each view to the latlong shader to create an equirectangular output texture?

Has anyone experimented with creating two 180 degree fisheye views of VUO scene geometry in order to get the whole 360, and then converting that to equirectangular? I would be particularly interested in that sort of functionality.

Great to see interest in this node! 360 Vuo is so cool.

Has anyone experimented with creating two 180 degree fisheye views

Yes, I did that to start, however the maths breaks down on the edges of the circle, so you end up with 2 square boxes on both hemispheres, (when viewed lat-long). So each hemisphere shows stitching lines. We could add another view and get rid of many lines. However there would always be a pole issue.
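For reference, here is a small Python sketch of the kind of lookup a dual-fisheye conversion needs. It assumes an equidistant fisheye (image radius proportional to the angle from the lens axis) pointing down +z. Both the projection model and the names are assumptions for illustration, not what my test actually used.

```python
import math

def direction_to_fisheye_uv(x, y, z, fov=math.pi):
    """Map a unit direction to (u, v) in [0, 1] on an equidistant fisheye
    image whose optical axis is +z. Returns None outside the field of view.
    The equidistant model (radius proportional to angle) is an assumption."""
    theta = math.acos(max(-1.0, min(1.0, z)))   # angle from the lens axis
    r = theta / (fov / 2.0)                     # 0 at the centre, 1 at the rim
    if r > 1.0 + 1e-9:
        return None                             # this lens cannot see it
    phi = math.atan2(y, x)                      # angle around the axis
    return (0.5 + 0.5 * r * math.cos(phi),
            0.5 + 0.5 * r * math.sin(phi))
```

Every direction at exactly 90° from the axis lands on the rim of the circle (r = 1), which is where the two hemispheres have to meet. That is the region where I saw the stitching lines.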

Here is an example. The 360 isn’t my own image, and I made this test about a year ago, for educational purposes only. Using 6 cameras looks like the best way to go.

This hasn’t got much support in votes, but given the relevance of various VR and 360 platforms (Oculus, FB, YouTube, Vimeo, etc.), I think it would be really cool to have some stock support for this, if a dedicated node could in fact be more heavily optimized than Alex’s method. I suspect it could be.

No, I have wound up just finding it more expedient to do equirectangular with raymarched scenes. But that has its limits.

I had some trouble getting the SDK installed on a new setup, so I haven’t been able to follow up and try to get a custom node working.

In Vuo 2.0.0 we added the Convert Cubemap to Equirectangular node. Thanks, @alexmitchellmus, for providing your source code.

This feature request remains open for voting, for implementing the nodes listed at the top of the page.

I initially tried the dual fisheye approach, but as mentioned earlier, there are issues with what happens on the rim.

I uploaded my equirectangular camera here

https://community.vuo.org/t/-/6454#comment-6961

Not sure of the relative merits.

IIRC dual fisheye will always have errors around the perimeter. Hence the need to go with a 90° cubemap rendering.

I think this is what the current Vuo2 code does, but I haven’t had time to check.